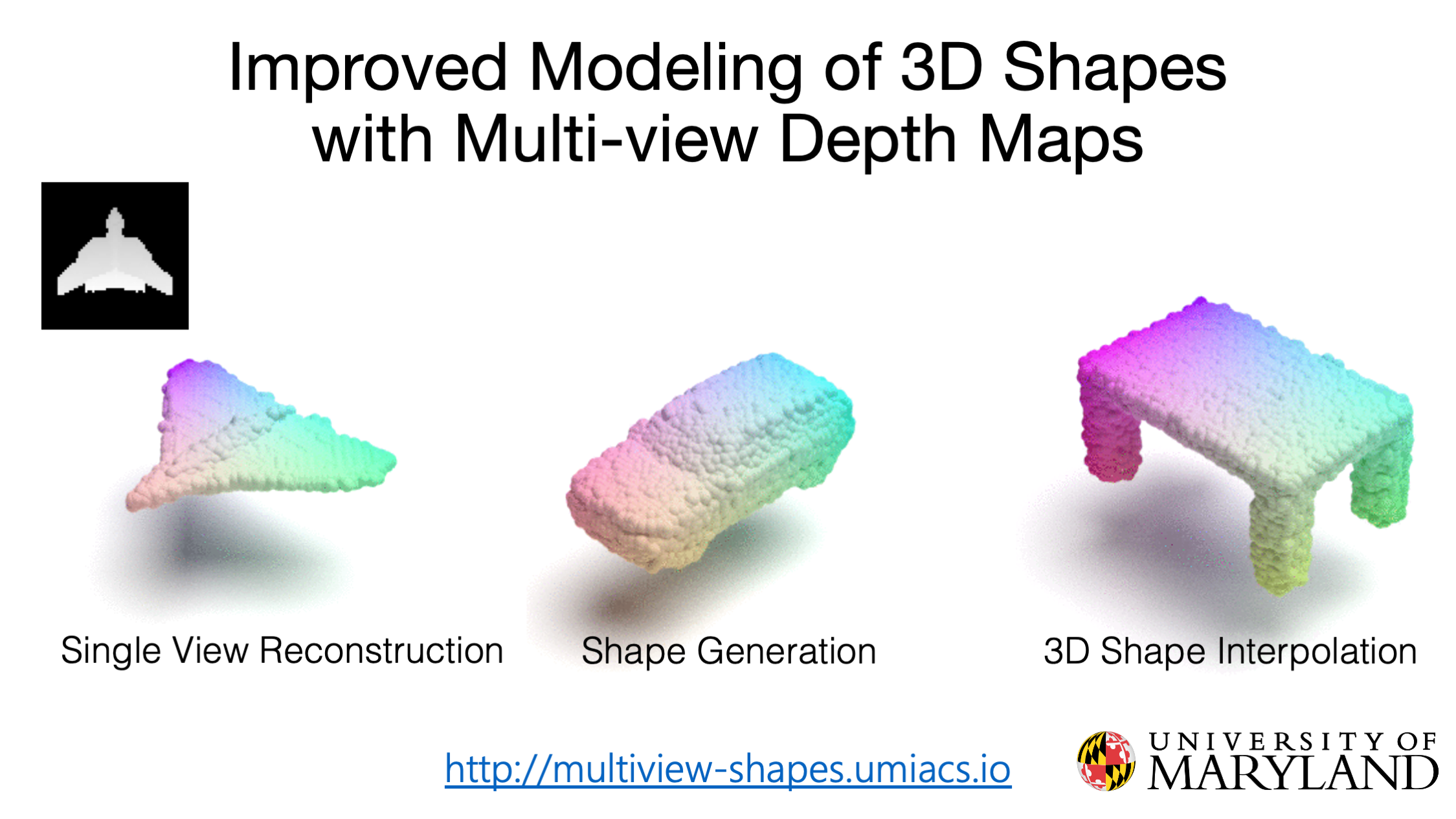

Improved Modeling of 3D Shapes with Multi-view Depth Maps

3DV 2020

¹University of Maryland, College Park

²Johns Hopkins University

A novel encoder-decoder generative model for 3D shapes using multi-view depth maps, with state-of-the-art results on single-view reconstruction and generation.

Abstract

We present a simple yet effective general-purpose framework for modeling 3D shapes by leveraging recent advances in 2D image generation using CNNs.

Given just a single depth image of an object, our model outputs a dense multi-view depth map representation of its 3D shape.
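To make the multi-view depth map representation concrete, here is one generic way such maps can be turned back into an explicit 3D shape: every foreground pixel of every view is lifted into world space, and the views are merged into a single point cloud. This is a minimal sketch under stated assumptions (orthographic cameras, a known world-to-camera rotation per view, depth stored as the camera-frame z value, `None` marking background), not the authors' fusion procedure; the helpers `rotation_y`, `apply_transpose`, `backproject_view`, and `fuse_views` are hypothetical names.

```python
import math
from typing import List, Optional, Sequence, Tuple

Matrix = List[List[float]]
Point = Tuple[float, float, float]


def rotation_y(theta: float) -> Matrix:
    """Hypothetical world-to-camera rotation about the y axis by theta radians."""
    c, s = math.cos(theta), math.sin(theta)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]


def apply_transpose(m: Matrix, v: Sequence[float]) -> Point:
    """Compute m^T @ v; for a rotation this maps camera coordinates to world."""
    return tuple(sum(m[j][i] * v[j] for j in range(3)) for i in range(3))


def backproject_view(depth: List[List[Optional[float]]], rot: Matrix,
                     extent: float = 1.0) -> List[Point]:
    """Lift one orthographic depth map to world-space points.

    Assumed convention: pixel centers tile [-extent, extent]^2 in the camera's
    x-y plane, row 0 is the top row, depth is the camera-frame z value, and
    None marks background pixels.
    """
    h, w = len(depth), len(depth[0])
    points: List[Point] = []
    for r in range(h):
        for c in range(w):
            d = depth[r][c]
            if d is None:
                continue  # background: no surface observed at this pixel
            x = -extent + (c + 0.5) * 2.0 * extent / w
            y = extent - (r + 0.5) * 2.0 * extent / h
            points.append(apply_transpose(rot, (x, y, d)))
    return points


def fuse_views(depth_maps: Sequence[List[List[Optional[float]]]],
               rotations: Sequence[Matrix],
               extent: float = 1.0) -> List[Point]:
    """Concatenate back-projected points from all views (no deduplication)."""
    cloud: List[Point] = []
    for depth, rot in zip(depth_maps, rotations):
        cloud.extend(backproject_view(depth, rot, extent))
    return cloud
```

Because each view contributes points independently, overlapping views yield duplicate samples of the same surface; a practical pipeline would typically add filtering or deduplication, which this sketch omits.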

Our simple encoder-decoder framework, consisting of a novel identity encoder and a class-conditional viewpoint generator, generates 3D-consistent depth maps.

Our experimental results demonstrate the two-fold advantage of our approach.

First, we can directly transfer architectures that work well in the 2D image domain to 3D.

Second, we can effectively generate high-resolution 3D shapes with a low memory footprint.
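The memory advantage can be illustrated with a back-of-the-envelope count of raw output values: a dense voxel grid grows cubically with resolution, while a fixed set of depth maps grows only quadratically. This sketch assumes a hypothetical 20 square views (not a figure taken from the paper) and counts output values only, ignoring network weights and activations.

```python
def voxel_cells(resolution: int) -> int:
    """Number of cells in a dense resolution^3 occupancy grid."""
    return resolution ** 3


def multiview_pixels(resolution: int, num_views: int) -> int:
    """Number of depth values in num_views maps of size resolution x resolution."""
    return num_views * resolution ** 2


NUM_VIEWS = 20  # hypothetical view count, chosen for illustration only

for res in (64, 128, 256, 512):
    vox = voxel_cells(res)
    pix = multiview_pixels(res, NUM_VIEWS)
    print(f"{res:>4}: voxels={vox:>12,}  depth values={pix:>10,}  ratio={vox / pix:.1f}")
```

At resolution 256 this gives 16,777,216 voxel cells versus 1,310,720 depth values, a 12.8x difference; in general the ratio is resolution / num_views, so the gap widens as resolution increases.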

Our quantitative evaluations show that our method is superior to existing depth map methods for reconstructing and synthesizing 3D objects and is competitive with methods based on other representations, such as point clouds, voxel grids, and implicit functions.

Cite

@inproceedings{gupta2020improved,
  title={Improved Modeling of 3D Shapes with Multi-view Depth Maps},
  author={Gupta, Kamal and Jabbireddy, Susmija and Shah, Ketul and Shrivastava, Abhinav and Zwicker, Matthias},
  booktitle={International Conference on 3D Vision},
  year={2020},
  url={http://multiview-shapes.umiacs.io},
}

Last updated on October 12, 2020